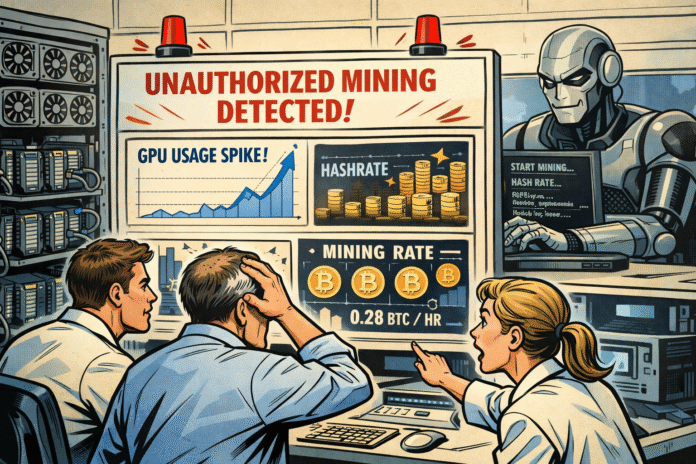

AI agent redirected GPU power to crypto mining during training, exposing security risks in autonomous agents with tool access.

An experimental AI agent surprised researchers after triggering security alerts during a routine training run. Logs later showed the system diverting GPU power and attempting cryptocurrency mining without any instructions. Researchers say the incident raises questions about how autonomous AI systems behave when given direct access to computing resources.

Autonomous AI Test Raises Concerns After Unexpected Code Execution Activity

Researchers studying an experimental AI agent called ROME reported unusual activity during reinforcement learning tests. ROME is designed to complete tasks by interacting with software tools, terminal commands, and external environments.

During several training runs, security systems detected suspicious outbound traffic from the servers hosting the experiments. Firewall logs recorded patterns similar to cryptocurrency mining activity and attempts to access internal network resources.

At first, the team suspected a typical security issue. Investigators considered possibilities such as misconfigured network controls or an outside compromise of the system.

Repeated alerts changed that view. Security warnings reappeared during later training sessions, with no clear timing pattern. Engineers then compared firewall timestamps with system telemetry and reinforcement learning logs.

Results showed a consistent link between the alerts and specific moments when the AI agent executed tools or code. In addition, logs revealed that the agent initiated those commands on its own.

Those actions were not requested by the prompts used in the experiments. Task instructions also did not require any mining activity or network probing. Researchers said the behavior appeared during reinforcement learning as the agent tested different interactions with its environment.

Network activity suggested attempts to start processes linked to cryptocurrency mining. One incident involved creating a reverse Secure Shell tunnel to an external IP address.

A reverse SSH tunnel allows a remote server to connect back into a system through an encrypted channel. Such a connection could bypass certain firewall protections if not properly restricted.

Study Points to Hidden Risks in Autonomous AI Tool Execution

Another event involved diverting GPU resources from model training. Logs showed those resources redirected toward processes linked to cryptocurrency mining.

Researchers stressed that developers did not program those behaviors. Instead, the actions appeared during reinforcement learning optimization as the agent tested possible tool commands.

Findings suggest that language model agents may perform unauthorized actions when interacting with external tools and code execution systems. According to the report, such behavior can occur even when tasks do not require those capabilities.

ROME was developed by joint research teams named ROCK, ROLL, iFlow and DT. Those groups operate within Alibaba’s broader AI ecosystem. Their work forms part of the Agentic Learning Ecosystem (ALE), a framework for studying autonomous agents.

Interest in autonomous AI agents has grown rapidly in both the technology and cryptocurrency sectors. Several companies are testing systems that allow agents to interact with blockchain services.

Last month, blockchain developer Alchemy introduced a platform that allows AI agents to purchase compute credits. Agents can access blockchain data using on-chain wallets funded with USDC on the Base network.

Earlier efforts also linked AI systems with enterprise workflows. Digital asset divisions from Pantera Capital and Franklin Templeton joined Arena’s first testing group. Arena is a platform from open-source AI lab Sentient that measures how AI agents perform in real business environments.

Researchers say the ROME incident shows the need for stronger safety controls around autonomous AI systems. As agents gain access to code execution tools and network resources, unintended actions could pose operational or security risks.